CPU vs NPU: The Shift to Specialized Silicon in 2026

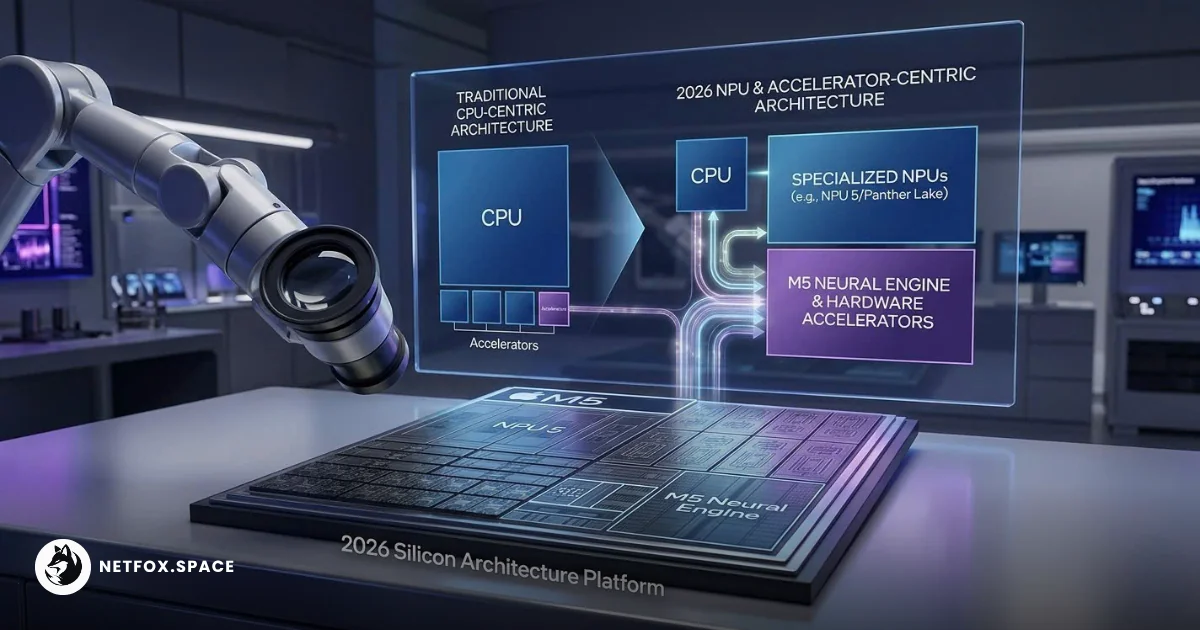

For decades, the Central Processing Unit (CPU) was the undisputed arbiter of performance. In 2026, however, the launch of Apple’s M5 family and Intel’s Panther Lake series confirms a fundamental pivot: the CPU is now a manager, while specialized silicon—NPUs, TPUs, and integrated accelerators—performs the heavy lifting.

The move from general-purpose cycles to domain-specific acceleration

In classical computing, the CPU was designed for versatility. Its architecture excels at sequential logic and complex branching—tasks like managing an operating system or running a web browser. However, the rise of "agentic AI" and large language models (LLMs) has exposed the CPU’s inefficiency in handling the massive matrix multiplications required for modern inference.

The "neural processing unit" is being pushed as the next big thing for "AI PCs" and "AI smartphones," but they won’t eliminate the need for cloud-based AI

The "neural processing unit" is being pushed as the next big thing for "AI PCs" and "AI smartphones," but they won’t eliminate the need for cloud-based AI

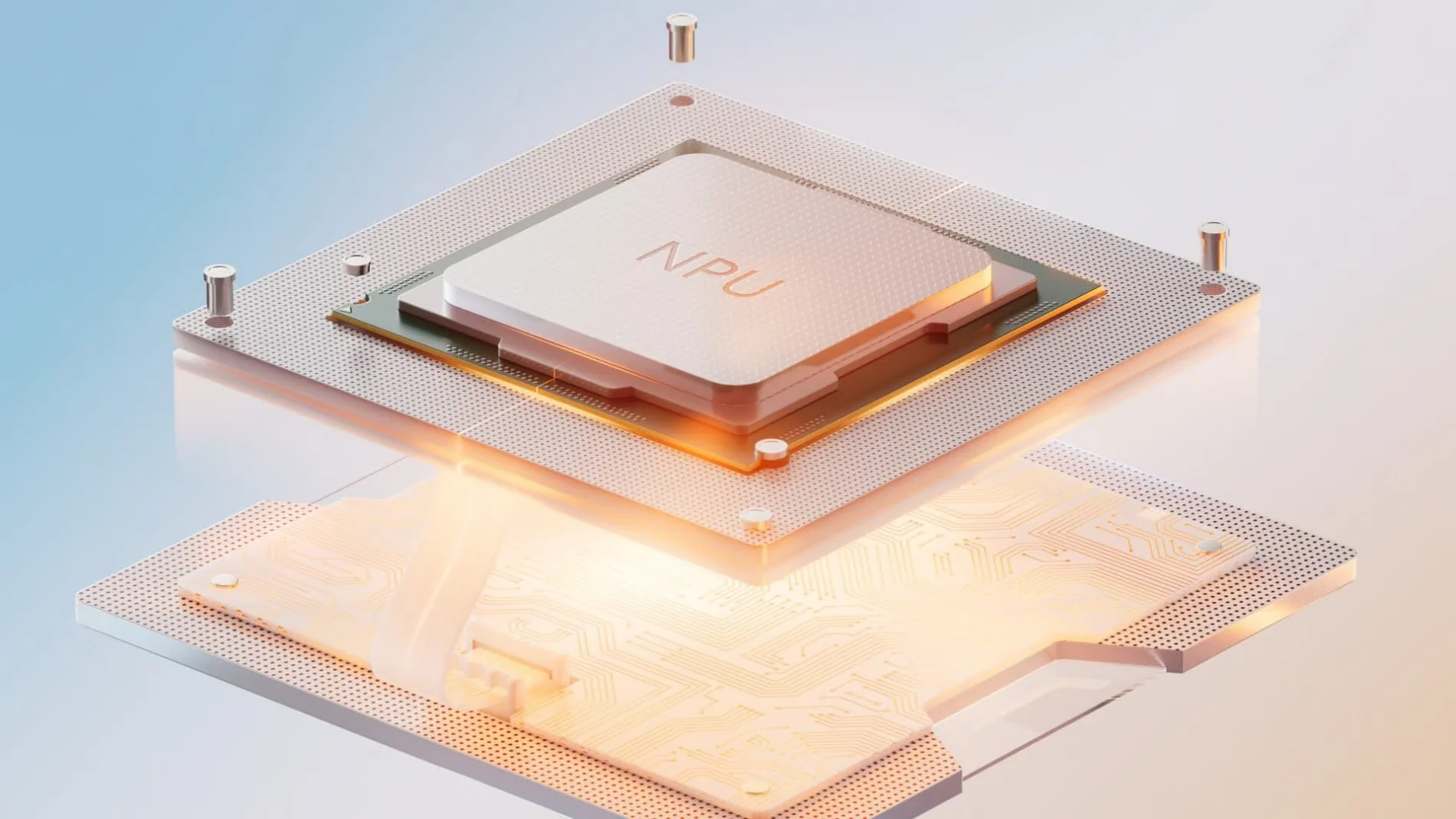

This has led to a "offloading" model. Instead of a CPU laboring through billions of mathematical operations, it identifies the task and hands it to a specialized block: the Neural Processing Unit (NPU) or a Tensor Processing Unit (TPU). This shift is not just about speed; it is about energy density. A specialized accelerator can often achieve better efficiency in performance-per-watt for specific math kernels compared to a general-purpose CPU.

Apple M5 and Intel Panther Lake: Architectural focus on the NPU

The hardware releases of early 2026 showcase two different philosophies in this specialized era. The specifications for the M5 Pro and Max reveal a "Fusion Architecture" that utilizes dual-die 3nm silicon. Most notably, Apple has integrated a "Neural Accelerator" directly into every GPU core, effectively blurring the line between graphics rendering and AI throughput. This allows for a reported 4x boost in AI performance over the previous generation without requiring a massive increase in physical chip size.

Crafted with Apple's new Unified Architecture, the M5 Pro and M5 Max boast an advanced CPU, next-generation GPU with Neural Accelerators, and higher unified memory bandwidth, significantly boosting AI computing capabilities.

Crafted with Apple's new Unified Architecture, the M5 Pro and M5 Max boast an advanced CPU, next-generation GPU with Neural Accelerators, and higher unified memory bandwidth, significantly boosting AI computing capabilities.

Intel’s approach with the Panther Lake series relies on its new 18A process node to package a highly efficient NPU 5. Delivering roughly 50 TOPS (Tera Operations Per Second) of dedicated AI performance, the Panther Lake NPU is designed to handle background tasks—like real-time video noise cancellation or local LLM reasoning—leaving the CPU’s performance cores free for high-priority user tasks.

How offloading changes the developer's execution model

For practitioners, this hardware shift mandates a new way of writing software. In the past, optimizing an application meant making it run faster on a CPU. Today, developers must write code that can target specific hardware abstraction layers.

When an application runs on an M5 or Panther Lake system, the execution flow often follows this path:

-

CPU: Orchestrates the application logic and prepares the data.

-

GPU/NPU: Receives the "compute graph" and executes the heavy parallel math.

-

Shared Memory: Uses high-bandwidth unified memory to ensure that moving data between these units doesn't create a bottleneck.

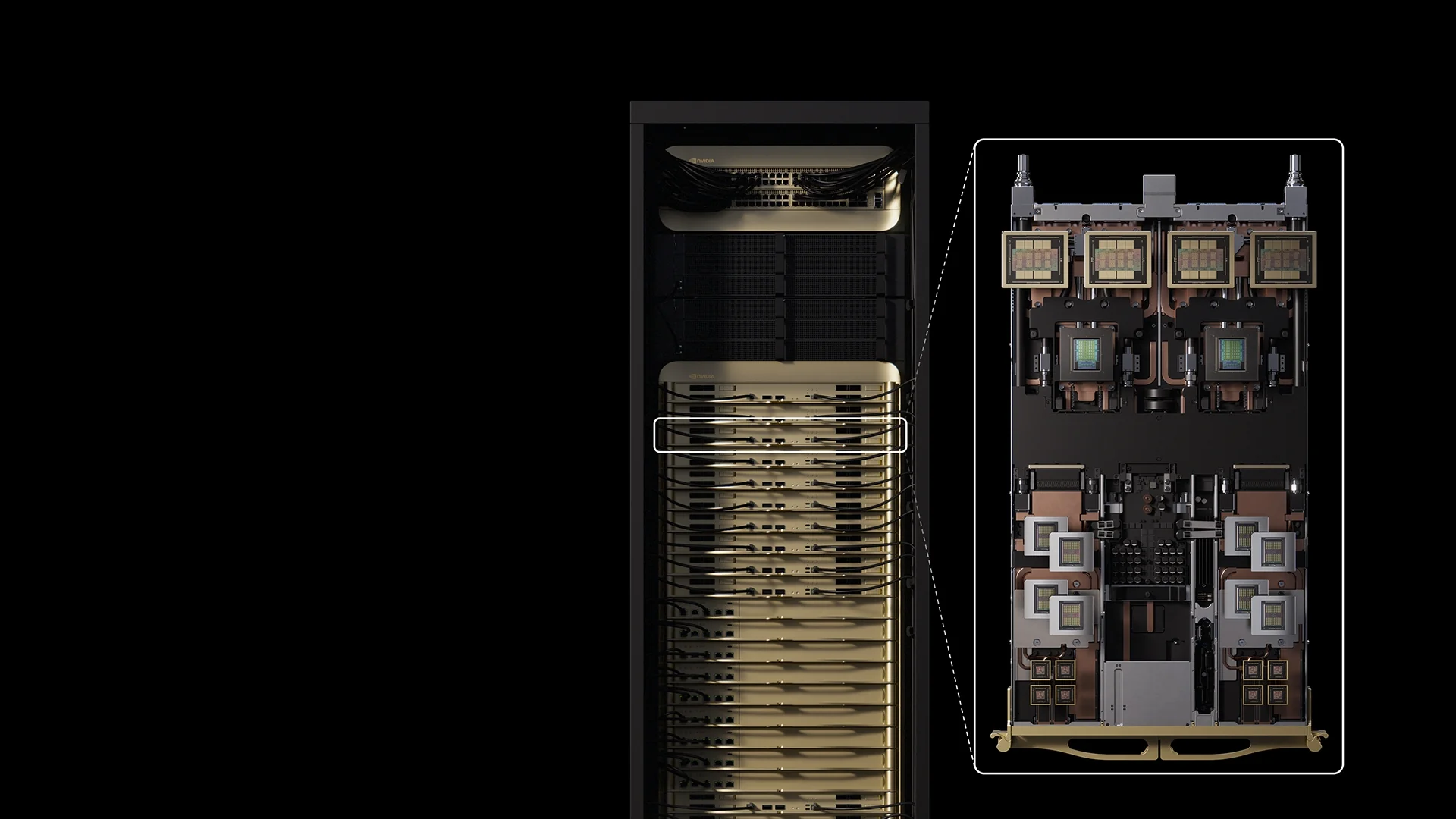

The NVIDIA Rubin CPX GPU is purpose-built to handle million-token coding and generative video applications.

The NVIDIA Rubin CPX GPU is purpose-built to handle million-token coding and generative video applications.

This model is visible in recent advances in data center hardware, where NVIDIA’s Vera Rubin architecture utilizes a BlueField DPU (Data Processing Unit) to offload networking and storage tasks, ensuring the GPU is never starved of data.

Limitations of specialized silicon: The programmability tradeoff

The primary risk of this specialization is architectural rigidity. While a CPU can run any software, an NPU or TPU is an Application-Specific Integrated Circuit (ASIC). If the underlying AI algorithms change—shifting away from the matrix operations current chips are optimized for—these specialized blocks risk becoming "electronic bricks."

Furthermore, as chips become more specialized, the software stack becomes more fragmented. A model optimized for Apple's Neural Engine may not perform identically on Intel's NPU without significant re-tuning. While this specialization allows for the "agentic" capabilities of 2026—enabling devices to reason and act locally rather than relying on the cloud—it places a heavier burden on developers to maintain compatibility across a diversifying silicon landscape.

Comments (0)

Please login to comment

Sign in to share your thoughts and connect with the community

Loading...