Google Translate Adds AI Pronunciation Practice Tool

Google is marking the 20th anniversary of Google Translate by turning the service from a passive reference tool into an active learning platform. The company has introduced a new "Practice" feature that uses artificial intelligence to give real-time feedback on a user's pronunciation and enunciation. The launch is a significant step in Google's 2026 strategy to embed functional AI across its legacy products.

Interactive phonetics and scoring arrive in the Translate 'Practice' menu

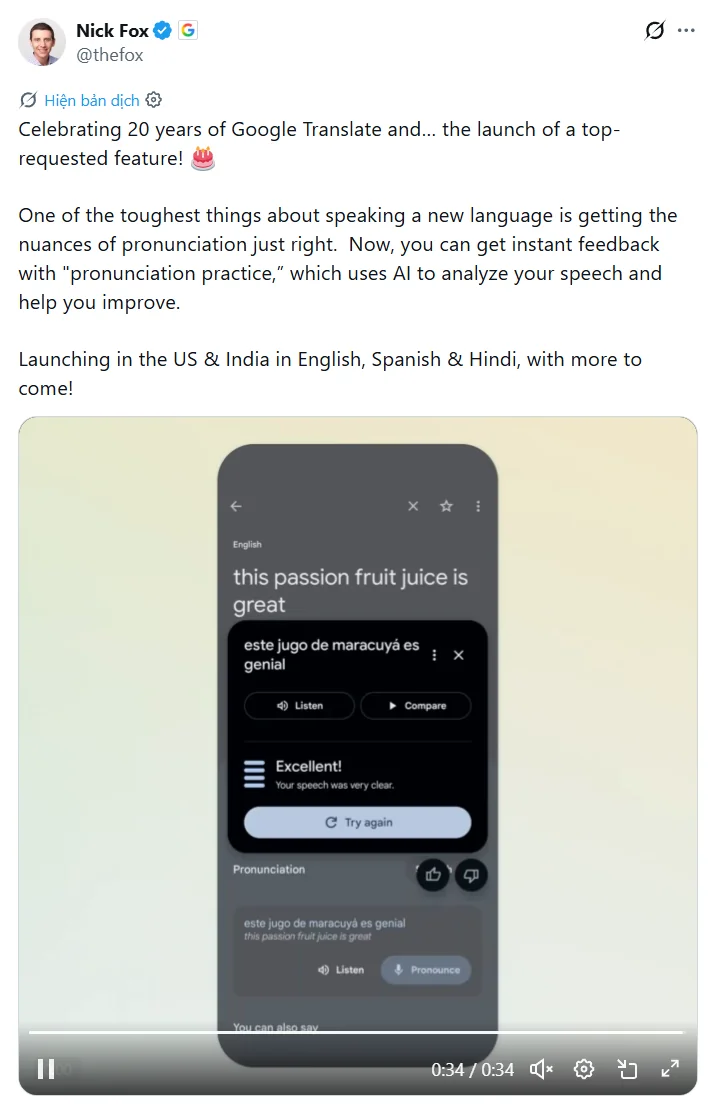

The new pronunciation tool allows users to go beyond simply reading a translation. When a user translates a word or phrase, a new "Practice" menu appears, housing a "Pronounce" button. This interface displays the phonetic breakdown of the text, prompting the user to speak the phrase into their device's microphone.

The underlying AI model analyzes the audio input against a canonical phonetic transcript, providing an immediate score. If the user’s speech is unclear or lacks proper inflection, the app provides specific feedback, such as noting that "some sounds were a little unclear." Currently, this feature is rolling out to users in the United States and India, with initial support for English, Spanish, and Hindi.
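Google has not published how the score is computed. The comparison described above can still be illustrated with a minimal sketch, assuming the speech recognizer has already converted the user's audio into a sequence of phoneme symbols. The sketch measures the Levenshtein (edit) distance between the spoken phonemes and the reference transcript and turns it into a 0 to 1 score. The function names, the ARPAbet-style symbols, and the 0.8 "clear" threshold are all hypothetical choices made for illustration, not Google's method.

```python
from typing import List, Tuple


def phoneme_edit_distance(reference: List[str], spoken: List[str]) -> int:
    """Levenshtein distance over phoneme tokens (insert, delete, substitute each cost 1)."""
    rows, cols = len(reference) + 1, len(spoken) + 1
    dist = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        dist[i][0] = i  # delete every reference phoneme
    for j in range(cols):
        dist[0][j] = j  # insert every spoken phoneme
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if reference[i - 1] == spoken[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # phoneme dropped by the speaker
                dist[i][j - 1] + 1,         # extra phoneme spoken
                dist[i - 1][j - 1] + cost,  # match or mispronounced phoneme
            )
    return dist[-1][-1]


def score_pronunciation(
    reference: List[str], spoken: List[str], clear_threshold: float = 0.8
) -> Tuple[float, str]:
    """Hypothetical scorer: 1.0 means the spoken phonemes match the reference exactly."""
    if not reference:
        raise ValueError("reference transcript must not be empty")
    errors = phoneme_edit_distance(reference, spoken)
    score = max(0.0, 1.0 - errors / len(reference))
    if score >= clear_threshold:
        feedback = "Sounds good!"
    else:
        feedback = "Some sounds were a little unclear."
    return round(score, 2), feedback


if __name__ == "__main__":
    # "cat" in ARPAbet-style symbols; the speaker substitutes one vowel.
    print(score_pronunciation(["K", "AE", "T"], ["K", "EH", "T"]))
```

With one substituted vowel out of three phonemes, the sketch returns a score of 0.67 and the "unclear" message. A production system would also weigh stress, intonation, and acoustic confidence, which a plain edit distance ignores.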

Celebrating 20 years of Google Translate and… the launch of a top-requested feature!


The update fits a broader pattern in how Google is integrating Gemini AI into its services: a move toward "Utility AI." Rather than simply generating text, the system performs a comparison task, measuring a person's speech against a verified linguistic baseline so the user gets a concrete learning benefit.

'Ask YouTube' brings conversational LLM search to video discovery

Parallel to the Translate update, Google is expanding its AI experiments into video discovery. A new feature called "Ask YouTube" is currently being tested on the YouTube Labs page. Available to Premium subscribers in the U.S. who are 18 and older, this feature adds a conversational layer to the traditional search bar.

Unlike standard keyword searches, "Ask YouTube" allows users to pose complex questions, such as requesting a three-day road trip itinerary. The system then generates a comprehensive response that synthesizes information from various videos alongside text-based summaries. This experiment, which is scheduled to run through June 8, 2026, represents an attempt to solve the "discovery gap" where relevant information buried deep within long-form video content is often missed by standard algorithms.

Early hands-on testing showed that the tool can return summaries of specific missions, complete with timestamps, in response to historical queries. However, the system is not yet a replacement for traditional search: in some cases it falls back to a standard video list when a query is too simple or falls outside the LLM's current confidence threshold.
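The fallback behavior described above can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions, not YouTube's implementation: it assumes the model reports a confidence value between 0 and 1, and it treats queries with fewer than four words as "too simple." The class names, the `route_query` function, and both thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class SynthesizedAnswer:
    """Hypothetical conversational answer built from several videos."""
    summary: str
    citations: List[str]  # e.g. "video_id@mm:ss" timestamp references
    confidence: float     # assumed model confidence in [0, 1]


@dataclass
class VideoList:
    """Hypothetical standard search result: a ranked list of video IDs."""
    video_ids: List[str]


def route_query(
    query: str,
    answer: SynthesizedAnswer,
    keyword_results: List[str],
    min_words: int = 4,
    min_confidence: float = 0.7,
) -> Union[SynthesizedAnswer, VideoList]:
    """Return the synthesized answer only when the query is complex enough
    and the model is confident; otherwise fall back to the plain video list."""
    too_simple = len(query.split()) < min_words
    low_confidence = answer.confidence < min_confidence
    if too_simple or low_confidence:
        return VideoList(video_ids=keyword_results)
    return answer


if __name__ == "__main__":
    answer = SynthesizedAnswer("Day 1: ...", ["abc123@02:10"], confidence=0.85)
    print(route_query("plan a three-day road trip itinerary", answer, ["abc123"]))
    print(route_query("cats", answer, ["xyz789"]))
```

Keeping the fallback as an explicit rule makes the trade-off visible: raising `min_confidence` shows fewer synthesized answers but reduces the chance of surfacing a confident-sounding hallucination.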

Implementation constraints and the risk of AI hallucinations in search

While the Translate feature operates within the relatively safe constraints of phonetic alignment, the "Ask YouTube" feature faces the well-documented challenge of AI hallucinations. Because the tool must "watch" and summarize video content, there is a risk of the AI misinterpreting visual or audio cues from creators.

Initial reports on the tool's accuracy highlighted instances where the AI yielded factually inaccurate information regarding specific hardware, such as the Steam Controller. This underscores a critical limitation for operators and users: conversational AI is currently better suited for low-stakes utility—like pronunciation practice—than for serving as a primary source of technical or factual truth.

For Google, these rollouts serve two purposes. They provide a massive live dataset for refining Large Language Models (LLMs) in multi-modal environments, and they attempt to normalize AI interaction for a user base that has grown increasingly skeptical of "AI-generated slop." By tying these features to established, high-utility apps like Translate and YouTube, Google is betting that practical value will eventually outweigh the friction of occasional inaccuracy.
