Xiaomi MiMo V2.5 Pro Leads GDPval-AA Agentic Benchmarks

Xiaomi’s MiMo V2.5 Pro has secured a leading position on the GDPval-AA benchmark, scoring 1578 and outperforming established peers in agentic real-world work tasks. The model’s release coincides with a planned shift to open weights, potentially placing it at the top of the open-weights intelligence hierarchy.

MiMo V2.5 Pro establishes a new baseline for agentic task performance

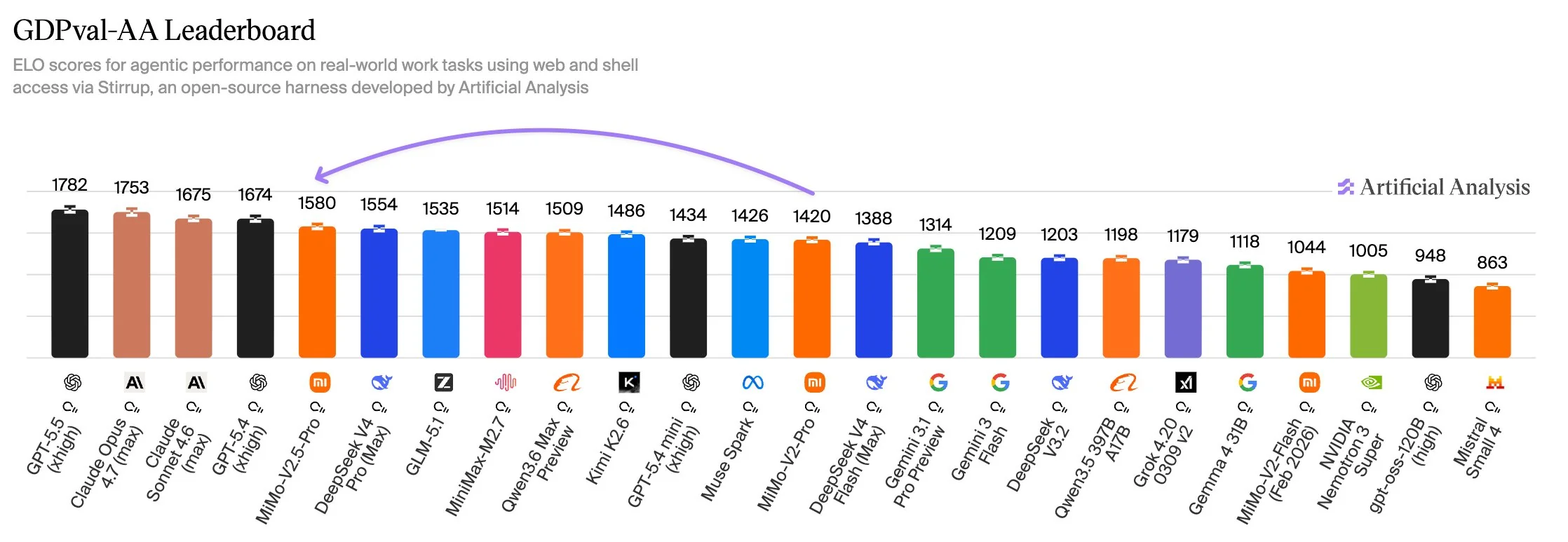

The recent performance data from Artificial Analysis places MiMo V2.5 Pro at the top of the GDPval-AA index, a benchmark specifically designed to measure how effectively AI models handle multi-step, agentic workflows. With a score of 1578, the model leads a competitive field that includes DeepSeek V4 Pro (1554), GLM-5.1 (1535), and Moonshot’s Kimi K2.6 (1484).

MiMo V2.5 Pro leads its peer group on agentic tasks. The model scores 1578 on GDPval-AA, placing it in the top tier for real-world work tasks among recent releases.

The release is a fast, focused iteration for Xiaomi. V2.5 Pro arrived just over a month after its predecessor, MiMo V2 Pro, shipped on March 19, 2026. In that window, the developers achieved an 11% increase in instruction following (IFBench) and a 6% gain in reasoning (HLE). The gains are not uniform across all metrics, however: the model regressed marginally in critical reasoning (CritPt), falling from 5% to 4%. This suggests that while its ability to follow complex paths has improved, its underlying verification logic may be under strain.

MiMo V2.5 Pro’s 54 on the broader Intelligence Index ties it with Kimi K2.6. Xiaomi has publicly signaled that the weights will be released "soon"; once they are, the model would technically become the highest-ranked open-weights model currently available, marginally ahead of DeepSeek V4 Pro.

Architectural tradeoffs and the cost of token efficiency

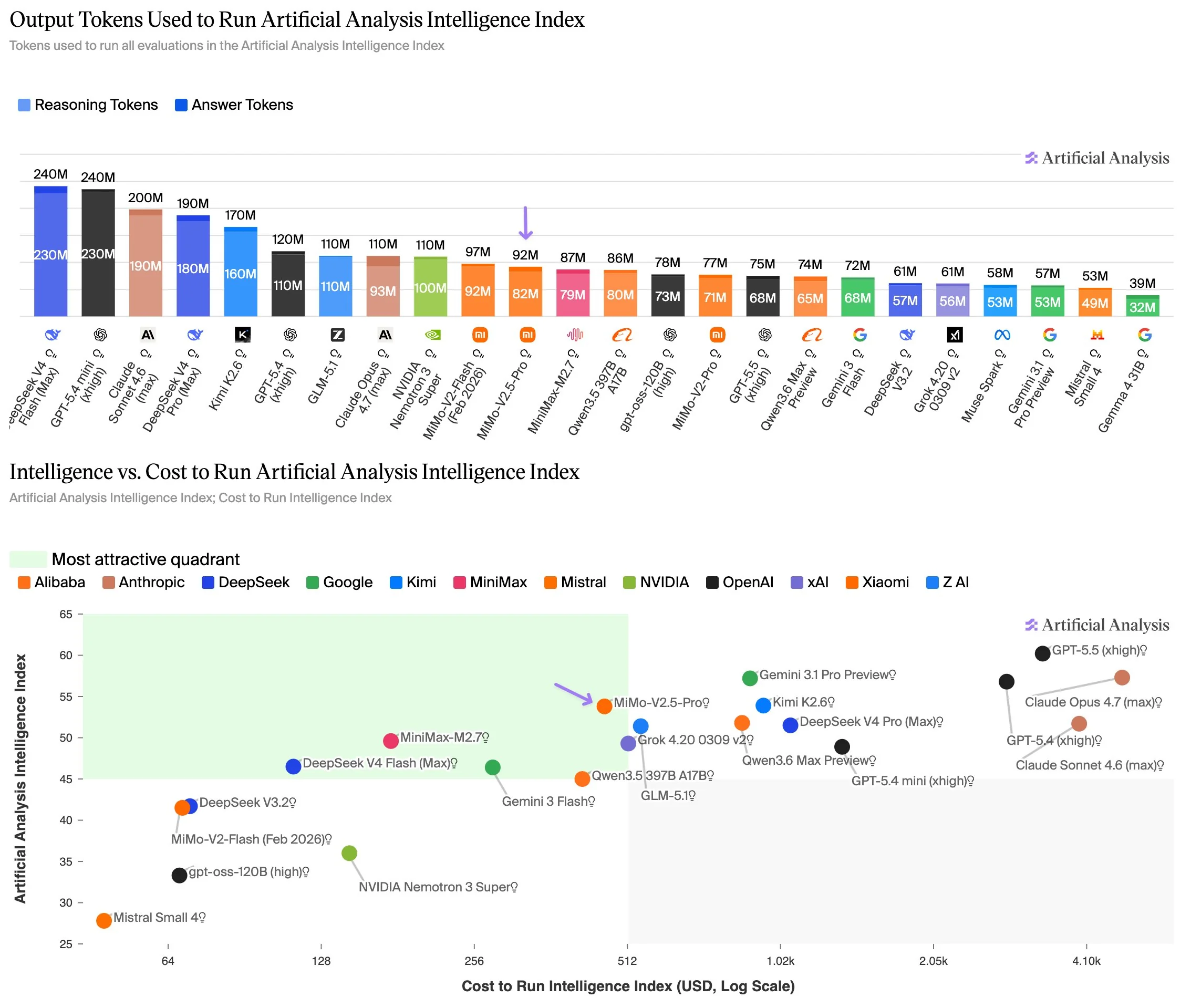

MiMo V2.5 Pro utilizes a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters, though only 42 billion are active during any single inference step. This design allows the model to maintain a 1-million-token context window while remaining on the "Pareto frontier" of the Intelligence vs. Cost index.
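To put the sparsity in perspective, the short sketch below computes the share of parameters that are active per inference step, using only the two figures quoted above. The function name `active_fraction` is a hypothetical helper introduced for illustration.

```python
def active_fraction(active_params: float, total_params: float) -> float:
    """Share of parameters used per inference step in a sparse MoE model."""
    return active_params / total_params


TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion total parameters (from the article)
ACTIVE_PARAMS = 42_000_000_000     # 42 billion active parameters (from the article)

share = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share per step: {share:.1%}")  # prints "Active share per step: 4.2%"
```

Roughly 4% of the model's parameters do work on any given step, which is the basic reason an MoE model of this size can be priced closer to a much smaller dense model.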

MiMo V2.5 Pro is considerably more token efficient than models in the same Intelligence tier

API pricing for the model is $1.00 per million input tokens and $3.00 per million output tokens. In practical terms, running the standard Artificial Analysis Intelligence Index cost approximately $462 on MiMo V2.5 Pro, compared with $544 for GLM-5.1 and $948 for Kimi K2.6.

However, this cost efficiency comes with higher token consumption than the model's own predecessor. V2.5 Pro used approximately 92 million output tokens to complete the Intelligence Index, a 19% increase over the 77 million tokens used by V2 Pro. It remains more efficient than Kimi K2.6 (170M tokens) and GLM-5.1 (110M), but the trend suggests that Xiaomi is paying for its improved agentic scores with heavier token usage.
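The arithmetic behind these comparisons can be reproduced in a few lines. This is a minimal sketch that uses only the figures quoted in this section; the helper names `pct_change` and `output_spend` are hypothetical, and the output-spend figure assumes the index run was billed at the list price of $3.00 per million output tokens.

```python
OUTPUT_PRICE_PER_M = 3.00  # USD per million output tokens (from the article)

# Output tokens used to run the Intelligence Index, in millions (from the article)
output_tokens_m = {
    "MiMo V2.5 Pro": 92,
    "MiMo V2 Pro": 77,
    "Kimi K2.6": 170,
    "GLM-5.1": 110,
}

# Total cost to run the Intelligence Index, in USD (from the article)
index_cost_usd = {"MiMo V2.5 Pro": 462, "Kimi K2.6": 948, "GLM-5.1": 544}


def pct_change(new: float, old: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


def output_spend(tokens_m: float, price_per_m: float = OUTPUT_PRICE_PER_M) -> float:
    """Output-token spend in USD, assuming list-price billing (an assumption)."""
    return tokens_m * price_per_m


growth = pct_change(output_tokens_m["MiMo V2.5 Pro"], output_tokens_m["MiMo V2 Pro"])
print(f"Output tokens vs V2 Pro: {growth:+.0f}%")

spend = output_spend(output_tokens_m["MiMo V2.5 Pro"])
print(f"Output-token spend at list price: ${spend:.0f}")

for peer in ("Kimi K2.6", "GLM-5.1"):
    ratio = index_cost_usd["MiMo V2.5 Pro"] / index_cost_usd[peer]
    print(f"Index cost relative to {peer}: {ratio:.2f}x")
```

Under that billing assumption, the 92 million output tokens account for about $276 of the $462 total, and the full run costs roughly half as much as Kimi K2.6 and about 85% as much as GLM-5.1.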

Coding challenge logs reveal a "brittle" agentic strategy

While benchmarks suggest superior agency, real-world programming challenges provide a more nuanced view of how MiMo handles dynamic environments. In the recent Word Gem Puzzle coding contest, the previous MiMo V2 Pro finished in second place, trailing Kimi K2.6 but outperforming frontier models like GPT-5.5.

Detailed move logs from the contest highlight a specific implementation constraint. While Kimi K2.6 utilized an "aggressive greedy loop" to actively slide tiles and solve puzzles, MiMo’s strategy was effectively a "static scanner." The model did not actually move tiles during the challenge; instead, it scanned the initial grid for existing long-form words and claimed them in a single batch.

Breakdown of individual evaluation results for MiMo V2.5 Pro

This approach proved highly effective on grids where seed words remained intact but failed entirely on larger, more scrambled boards where active tile manipulation was required. For operators, this indicates that MiMo’s high agentic scores may stem from high-speed pattern recognition and execution rather than robust, adaptive problem-solving. It excels when the "path" is visible in the initial state but may struggle in highly fluid environments compared to models with more active "greedy" heuristics.
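The "static scanner" behavior described above can be illustrated with a toy example. The contest's actual rules, grid format, and dictionary are not given here, so everything in this sketch is an assumption: the grid is a list of equal-length strings, words count only when they read left to right in a row or top to bottom in a column, and `static_scan` is a hypothetical name, not MiMo's actual code.

```python
def lines_of(grid: list[str]) -> list[str]:
    """Return every row and every column of the grid as a string."""
    rows = list(grid)
    cols = ["".join(col) for col in zip(*grid)]
    return rows + cols


def static_scan(grid: list[str], dictionary: set[str], min_len: int = 3) -> list[str]:
    """Find dictionary words already present in the grid, without moving any tile.

    Results are ordered longest first, then alphabetically.
    """
    found = set()
    for line in lines_of(grid):
        for start in range(len(line)):
            for end in range(start + min_len, len(line) + 1):
                candidate = line[start:end]
                if candidate in dictionary:
                    found.add(candidate)
    return sorted(found, key=lambda w: (-len(w), w))


# Hypothetical inputs for illustration only
words = {"GEM", "GEMS", "GOLD", "RAT", "MOVE"}
intact_grid = ["GEMS", "OXRT", "LQAY", "DZTE"]
scrambled_grid = ["XQZ", "JKV", "WPB"]

print(static_scan(intact_grid, words))     # ['GEMS', 'GOLD', 'GEM', 'RAT']
print(static_scan(scrambled_grid, words))  # []
```

The second call shows the failure mode: a scanner that never moves tiles has nothing to claim once the seed words are broken up, whereas a strategy that actively slides tiles can still make progress.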

Factual accuracy regressions and the hallucination gap

Despite the gains in instruction following, MiMo V2.5 Pro shows signs of regression in factual reliability. On the AA-Omniscience Index—a measure of factual accuracy and hallucination—the model’s score dropped to 4, down from the V2 Pro’s score of 5.

The analysis of the model's outputs showed a hallucination rate of 25%, paired with a relatively low accuracy rate of 23%. This suggests that while the model is less likely to "invent" facts than some smaller peers, it frequently fails to retrieve the correct information, leading to a "low-confidence" performance profile in knowledge-heavy tasks.

Compared to proprietary frontier models, MiMo V2.5 Pro remains a tool primarily suited for structured agentic tasks where the environment is well-defined and the penalty for a lack of dynamic adaptation is low. The upcoming release of its weights will be a critical test for the open-weights community, determining if Xiaomi’s specific "static" agentic optimization can be fine-tuned into a more resilient general-purpose solver.
